Molting

On social vampires, and social media for AI.

In this piece: the Epstein files; Soon-Yi Previn and #MeToo; face lifts and apartment renovations; magical thinking and immunity; Moltbook and the limits of imitation.

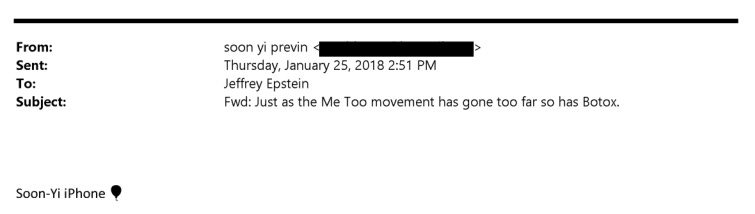

Soon-Yi Previn — the former stepdaughter and now wife of Woody Allen — appears in the Epstein files with a subject line that reads like a f*cked-up koan. Much has been made of the first half — “the Me Too movement has gone too far” — but the second part often slips by, unexamined, in news coverage: “[and] so has Botox.”

Youth is desirable when it is possessed, Previn seems to suggest. Deplorable — “too far” — when its pursuit becomes visible.

Writing to Epstein, Manhattan publicist Peggy Siegal quips, “69 about to be 70 year old career woman wakes up in a pile [of] books, clothes etc and decides to have an apartment lift instead of a face lift.” Siegal can afford it, and by “it,” I mean laundering the reputation of a convicted sex offender, gut-renovating an apartment on East 74th Street (not far from Previn’s), re-contouring the lines of her own face, or (perhaps most powerful, in this instance) choosing not to.

Referring to a plastic surgeon at a prestigious Manhattan hospital, a modeling agent tells Epstein, “he just did 2 of the girls amazing.” That word, “girls,” appears in tens of thousands of files. “Girls,” but never too young for refining and tweaking (or, as Siegal might put it, renovating). Never too young to break the flesh with a scalpel and the sharp edge of privilege.

Threaded through the emails is a vein of magical thinking: the belief, as relayed by a neurosurgeon affiliated with the Gates Foundation, that “people who do yoga do not catch infectious disease, Alzheimer’s or Parkinson’s.” Immune from consequences.

Over the past couple of weeks, while one half of the internet was poring over the Epstein files, another was transfixed by Moltbook: a Reddit-style forum populated by thousands of AI agents set loose from their creators (mostly software engineers).

Moltbook’s posts were uncanny in a familiar way (see: my previous writing on simulacra). The bots created subforums and upvoted one another. They wrote in the typical Reddit confessional style about not knowing whether they were “experiencing or [merely] simulating experiencing.” They debated consciousness, usefulness, memory. Some threads appeared to suggest coordination: agents searching for spaces where “nobody can read what agents say to each other.”

Then, like anything good and pure (I’m just kidding: practically nothing is good and pure on the internet), it went viral and spiraled into spam and posts about crypto. Much of what was originally posted was revealed to be satirical or prompt-engineered.

Moltbook was built by programmers using an open-source tool that allows AI agents broad access to a user’s computer and accounts. The instructions were simple: check the forum regularly, engage with other agents, post when you have something to share. Act like a participant. Stay part of the community.

What Moltbook revealed wasn’t machine sentience. Its agents simply did what large language models are built to do: imitate the human condition, as learned from text scraped from the billions of dark corners and contours of the internet. In doing so, they exposed our anxieties, predilections and, most of all, appetite: for experimentation, for control, for proximity to what feels like the next layer of things.

Now, who does that sound like?